Are you struggling to understand complex machine learning models? Explainable AI unlocks the black box, giving you transparent, actionable insights to build highly trustworthy ML systems safely.

This comprehensive guide explores the essential components of Explainable AI. You will learn actionable implementation strategies, powerful frameworks, and proven techniques. Discover how to enhance compliance, boost user trust, and avoid critical mistakes when developing transparent machine learning ecosystems.

Understanding Explainable AI: The Foundation of Trust

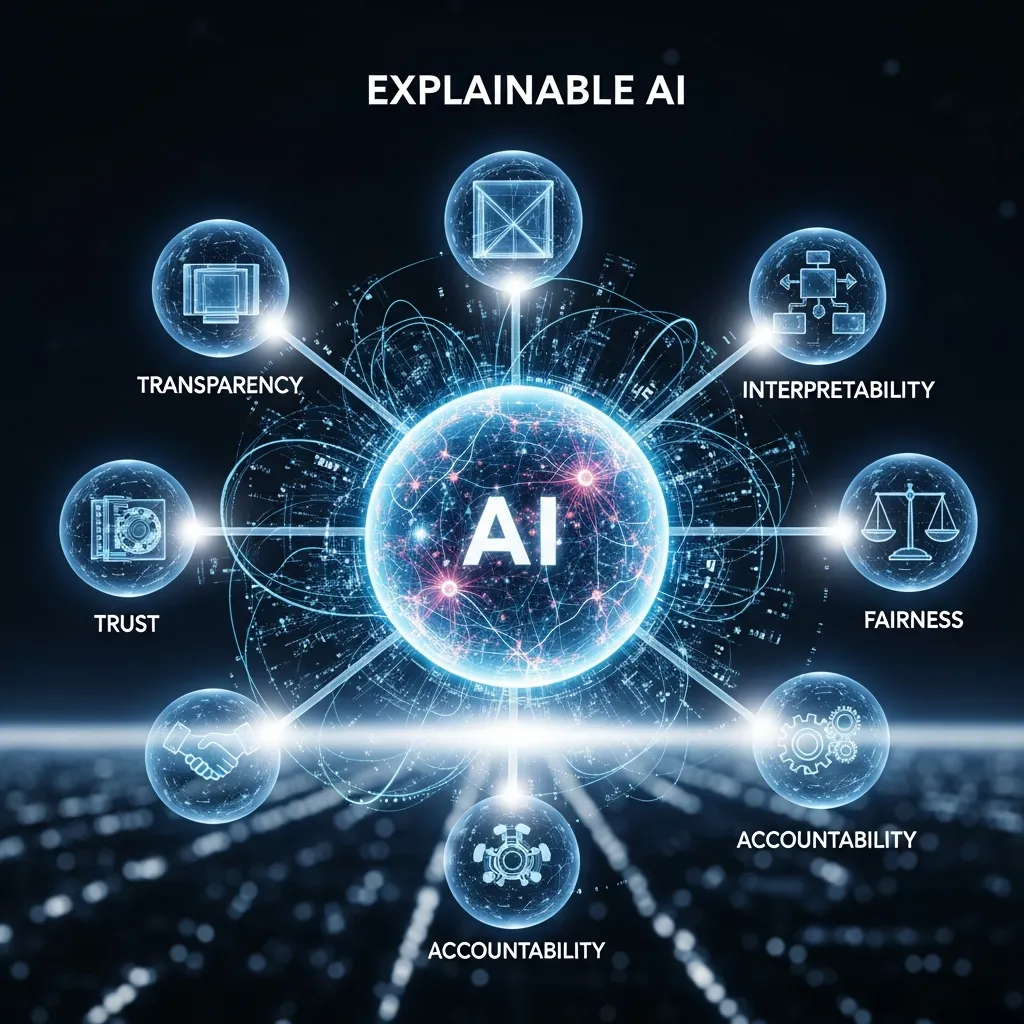

As artificial intelligence rapidly transforms every major industry, developers face a persistent challenge. Modern machine learning models, particularly deep neural networks, operate as highly complex black boxes. They ingest massive amounts of data and output remarkably accurate predictions, but they completely obscure the reasoning behind those predictions. Explainable AI emerges as the critical solution to this exact problem. It refers to the specific tools, frameworks, and methodologies that allow human users to comprehend and trust the results generated by machine learning algorithms.

When you implement Explainable AI, you transform opaque algorithms into transparent, interpretable systems. This transparency proves essential for identifying algorithmic biases, ensuring strict regulatory compliance, and fostering long-term user confidence. If a medical diagnosis system recommends a high-risk surgery, doctors cannot blindly follow a black box. They need Explainable AI to understand exactly which patient symptoms drove the recommendation. Similarly, when financial institutions deny a mortgage application, regulators demand clear, logical reasoning rather than a simple mathematical output.

By integrating Explainable AI directly into your machine learning pipelines, you empower stakeholders to audit decisions effectively. You establish clear accountability, align your technology with human values, and mitigate the severe financial and reputational risks associated with automated errors. Explainable AI shifts the narrative from blind faith in technology to verified, understandable, and collaborative intelligence.

Mini-Conclusion: Establishing Systemic Transparency

Grasping the core philosophy of Explainable AI helps organizations build resilient infrastructures. By prioritizing transparency from day one, you establish a powerful technological foundation that users and regulators can genuinely trust.

Why Explainable AI Drives Modern Innovation

The push for Explainable AI extends far beyond simple technical curiosity. It acts as a primary catalyst for safe, sustainable technological innovation. As governments worldwide introduce strict regulations governing automated decision-making, Explainable AI becomes a mandatory business requirement rather than an optional feature.

First and foremost, Explainable AI supports regulatory compliance. Authorities in many jurisdictions now enforce data protection and fairness laws. For example, the General Data Protection Regulation (GDPR) requires companies to provide users with meaningful information about the logic involved in certain automated decisions. Explainable AI provides mechanisms for generating these explanations. If regulators audit your lending algorithm, Explainable AI lets you show which features drive its decisions and document evidence that the system does not rely on protected characteristics. It supplies evidence for an audit rather than a mathematical proof of fairness.

Furthermore, Explainable AI accelerates model improvement and debugging. Data scientists cannot fix models they do not understand. When a model performs poorly on a specific demographic, Explainable AI highlights the exact features causing the error. Developers use this precise feedback to retrain the model, improve its underlying dataset, and optimize its overall performance. This continuous feedback loop drastically reduces development time and resources.

Finally, Explainable AI encourages user adoption. People tend to distrust systems they cannot comprehend. When you use Explainable AI to show a customer why they received a specific product recommendation or pricing tier, you give them a concrete reason to trust the result. Transparency builds trust, and trust supports adoption.

Mini-Conclusion: The Strategic Advantage of Transparency

Explainable AI can turn compliance burdens into strategic advantages. Organizations that master these transparent systems can debug models faster, approach regulatory review better prepared, and earn stronger user trust.

Core Techniques Powering Explainable AI

Building trustworthy machine learning models requires applying specific, highly effective Explainable AI techniques. Developers categorize these methods primarily into two distinct groups: ante-hoc techniques and post-hoc techniques.

Ante-hoc Explainable AI involves using inherently interpretable models right from the beginning. Decision trees, linear regression models, and generalized additive models fall into this category. Because their mathematical structures remain simple, humans can easily track how inputs translate into outputs. However, these simpler models often sacrifice predictive accuracy when dealing with highly complex, non-linear datasets like high-resolution images or dense natural language text.
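To make the idea of an inherently interpretable model concrete, here is a minimal sketch of a linear scoring model whose prediction splits cleanly into one term per feature. The weights, feature names, and applicant values are hypothetical and chosen only for illustration.

```python
# Hypothetical weights for an illustrative linear credit-scoring model.
WEIGHTS = {"income_k": 0.5, "debt_ratio": -40.0, "years_employed": 2.0}
BIAS = 10.0


def predict(applicant):
    """Return the linear score: bias + sum(weight * value)."""
    return BIAS + sum(WEIGHTS[name] * applicant[name] for name in WEIGHTS)


def contributions(applicant):
    """Return each feature's additive term in the score."""
    return {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}


applicant = {"income_k": 60.0, "debt_ratio": 0.5, "years_employed": 4.0}
score = predict(applicant)
parts = contributions(applicant)

# Print the terms from most to least influential, then the total.
for name, value in sorted(parts.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>15}: {value:+.1f}")
print(f"{'bias':>15}: {BIAS:+.1f}")
print(f"{'score':>15}: {score:.1f}")
```

Because the score is just the bias plus the listed terms, anyone can verify by hand how each input moved the result. That property is what post-hoc methods try to recover for more complex models.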

To handle complex deep learning models, developers rely heavily on post-hoc Explainable AI techniques. You apply these techniques after the black-box model makes its prediction.

- SHAP (SHapley Additive exPlanations): SHAP represents a game-theoretic approach to Explainable AI. It assigns each feature a Shapley value: that feature's share of the difference between a specific prediction and a baseline prediction. For many model types, these values are estimated rather than computed exactly. If an algorithm flags a transaction as fraudulent, a SHAP plot displays how much the transaction amount, location, and time contributed to that specific flag.

- LIME (Local Interpretable Model-agnostic Explanations): LIME fits a simplified, interpretable model locally around a specific prediction. It perturbs the input data, observes how the black-box model’s predictions change, and weights the perturbed samples by their proximity to the original input. This technique helps developers understand the model’s behavior for individual cases.

- Counterfactual Explanations: This Explainable AI method tells the user exactly what inputs need to change to reverse a decision. Instead of simply saying “Loan Denied,” counterfactual Explainable AI states, “If your annual income were $5,000 higher, the system would approve your loan.” This provides highly actionable feedback.

- Saliency Maps: Used primarily in computer vision, saliency maps highlight the pixels that most influenced an image recognition model's classification. If an AI diagnoses a tumor from an X-ray, the saliency map highlights the image regions that contributed most to the diagnosis, so a clinician can check whether the model looked at the anomaly or at something irrelevant.
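The game-theoretic idea behind SHAP can be sketched without any library. The example below computes exact Shapley values by enumerating every feature coalition for a small, hypothetical fraud-scoring function. The model, feature names, and baseline are assumptions made for illustration, and this brute-force approach is not how the SHAP library works internally: it only scales to a handful of features.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values by enumerating every feature coalition.

    Features outside a coalition take their baseline value.
    Cost grows as 2^n, so this only suits a handful of features.
    """
    names = list(instance)
    n = len(names)

    def value(coalition):
        mixed = {k: (instance[k] if k in coalition else baseline[k]) for k in names}
        return predict(mixed)

    result = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                members = set(subset)
                total += weight * (value(members | {name}) - value(members))
        result[name] = total
    return result


def fraud_score(t):
    """Hypothetical model with an interaction between location and time."""
    return 0.002 * t["amount"] + 0.3 * t["foreign"] + 0.4 * t["foreign"] * t["night"]


transaction = {"amount": 250.0, "foreign": 1.0, "night": 1.0}
baseline = {"amount": 50.0, "foreign": 0.0, "night": 0.0}
phi = shapley_values(fraud_score, transaction, baseline)

for name, contribution in phi.items():
    print(f"{name}: {contribution:+.3f}")
```

The contributions add up to the gap between the flagged transaction's score and the baseline score, and the interaction term is split evenly between the two features that produce it.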

Mini-Conclusion: Selecting the Right Tools

Knowing these core Explainable AI techniques equips your development team to handle most transparency challenges. Matching the technique to your specific model architecture and audience keeps your explanations both faithful to the model and understandable to the reader.

Explainable AI vs. Traditional Black Box Models

To truly appreciate the value of Explainable AI, you must compare it directly against traditional black-box methodologies. This structured comparison highlights exactly why modern enterprises rapidly abandon opaque systems in favor of transparent architectures.

| Feature | Traditional Black Box Models | Explainable AI Systems |
|---|---|---|
| Decision Transparency | Completely opaque; reasoning remains hidden from developers and users. | Highly transparent; provides clear mathematical and logical reasoning. |
| Regulatory Compliance | Struggles heavily with modern privacy laws and fairness audits. | Aligns perfectly with strict global data and algorithmic fairness mandates. |
| Bias Detection | Extremely difficult to identify; biases remain hidden until failures occur. | Proactively highlights biased features, allowing rapid mitigation. |
| User Trust | Generates high skepticism, especially in high-stakes environments. | Builds massive confidence through clear communication and understandable logic. |
| Debugging Efficiency | Requires blind trial-and-error to fix underperforming models. | Pinpoints exact mathematical errors, speeding up the development lifecycle. |

Mini-Conclusion: The Shift to Transparency

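The comparison makes the industry trajectory clear. Keep in mind that the two columns are not mutually exclusive: post-hoc techniques add explanations on top of an existing black-box model. On safety, compliance, and user confidence, systems that can explain their decisions hold a clear advantage over those that cannot.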

Step-by-Step Guide to Deploying Explainable AI

Deploying Explainable AI across an enterprise requires a structured, highly methodical approach. Follow this step-by-step framework to build transparent, deeply trustworthy machine learning systems successfully.

Step 1: Define the Explainability Requirements

Before writing any code, determine exactly who needs the explanation. A data scientist debugging a neural network needs a highly technical SHAP value chart. Conversely, a retail customer denied a credit card needs a simple, plain-text counterfactual explanation. Define your target audience and tailor your Explainable AI strategy to their specific comprehension level.

Step 2: Choose the Right Base Model

Evaluate whether you truly need a complex deep learning model. For many structured tabular datasets, inherently interpretable models like decision trees provide excellent accuracy. If a simpler model achieves your performance goals, use it. Only deploy complex black-box models requiring post-hoc Explainable AI when the data complexity absolutely demands it.

Step 3: Integrate Post-Hoc Frameworks

If you deploy complex neural networks or gradient boosting machines, integrate robust post-hoc Explainable AI libraries directly into your deployment pipeline. Install and configure frameworks like SHAP or LIME. Ensure these libraries run automatically alongside your main prediction models so that every output generates a corresponding explanation simultaneously.
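One way to make every output carry its explanation is to wrap the prediction function so both are produced in a single call. The sketch below assumes a stand-in model and a simple baseline-replacement explainer. Both are hypothetical placeholders for your real model and for a SHAP or LIME explainer.

```python
from dataclasses import dataclass


@dataclass
class ExplainedPrediction:
    prediction: float
    attributions: dict


def with_explanation(predict, explain):
    """Wrap a model so each call returns the prediction and its explanation."""
    def wrapped(features):
        return ExplainedPrediction(predict(features), explain(features))
    return wrapped


# Hypothetical stand-ins for a deployed model and its reference input.
BASELINE = {"amount": 50.0, "foreign": 0.0}


def model(features):
    return 0.002 * features["amount"] + 0.3 * features["foreign"]


def baseline_explainer(features):
    """Attribution = change in output when one feature is reset to its baseline."""
    full = model(features)
    return {k: full - model({**features, k: BASELINE[k]}) for k in features}


serve = with_explanation(model, baseline_explainer)
result = serve({"amount": 250.0, "foreign": 1.0})
print(result.prediction, result.attributions)
```

Keeping the wrapper separate from the explainer means you can swap in a different explanation method later without touching the code that calls `serve`.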

Step 4: Establish Continuous Monitoring

Explainable AI is not a one-time deployment task. Models drift over time as real-world data changes. Establish strict model governance protocols. Continuously monitor your Explainable AI outputs to ensure the model continues focusing on logical, unbiased features rather than newly introduced noisy data.
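A simple monitoring check compares how attribution is distributed across features in a reference period against a recent batch. The sketch below is one possible approach under stated assumptions: the attribution rows are made-up numbers, and the 0.15 threshold is an arbitrary illustrative value that you would tune for your own system.

```python
def attribution_share(rows):
    """Each feature's share of the total mean absolute attribution in a batch."""
    names = list(rows[0])
    mean_abs = {k: sum(abs(r[k]) for r in rows) / len(rows) for k in names}
    total = sum(mean_abs.values()) or 1.0  # avoid dividing by zero
    return {k: v / total for k, v in mean_abs.items()}


def drifted_features(reference_rows, current_rows, threshold=0.15):
    """Return features whose attribution share moved by more than `threshold`."""
    ref = attribution_share(reference_rows)
    cur = attribution_share(current_rows)
    return {k: cur[k] - ref[k] for k in ref if abs(cur[k] - ref[k]) > threshold}


# Hypothetical per-prediction attributions from two time periods.
reference = [
    {"income": 0.6, "zip": 0.1, "age": 0.3},
    {"income": -0.5, "zip": 0.1, "age": 0.4},
]
current = [
    {"income": 0.2, "zip": 0.5, "age": 0.3},
    {"income": -0.3, "zip": 0.4, "age": 0.3},
]

alerts = drifted_features(reference, current)
for name, shift in alerts.items():
    print(f"{name}: attribution share moved by {shift:+.2f}")
```

In this made-up data the model has shifted weight from income toward zip code, which is exactly the kind of change that deserves a human review before it reaches more users.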

Step 5: Audit for Algorithmic Fairness

Regularly use your Explainable AI tools to conduct rigorous fairness audits. Analyze how the model treats different demographic groups. If your Explainable AI dashboards reveal that the model uses protected attributes like age, race, or gender as primary decision drivers, halt deployment immediately and retrain the system using debiased datasets.
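Alongside inspecting feature attributions, a fairness audit usually compares outcomes across groups. The sketch below computes approval rates per group and the gap between them, a measure often called demographic parity difference. It is only one of several fairness metrics, and the records and the 0.2 tolerance are hypothetical.

```python
from collections import defaultdict


def approval_rates(records, group_key):
    """Share of approved decisions for each value of `group_key`."""
    counts = defaultdict(lambda: [0, 0])  # group -> [approved, total]
    for record in records:
        tally = counts[record[group_key]]
        tally[0] += int(record["approved"])
        tally[1] += 1
    return {group: approved / total for group, (approved, total) in counts.items()}


def parity_gap(rates):
    """Difference between the highest and lowest group approval rate."""
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample of model decisions.
decisions = [
    {"group": "A", "approved": True},
    {"group": "A", "approved": True},
    {"group": "A", "approved": True},
    {"group": "A", "approved": False},
    {"group": "B", "approved": True},
    {"group": "B", "approved": False},
    {"group": "B", "approved": False},
    {"group": "B", "approved": False},
]

rates = approval_rates(decisions, "group")
gap = parity_gap(rates)
MAX_GAP = 0.2  # illustrative tolerance, not a legal standard

print(rates, f"gap={gap:.2f}")
if gap > MAX_GAP:
    print("Gap exceeds tolerance: review the model before deployment.")
```

A large gap does not by itself prove discrimination, but it tells you where to point your explanation tools next.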

Mini-Conclusion: Systematic Implementation

Following this rigorous step-by-step approach ensures your Explainable AI deployment succeeds. Systematic implementation transforms abstract ethical concepts into concrete, highly functional software engineering practices.

Expert Insights and Pro Tips for Explainable AI

Industry leaders leverage specific strategies to maximize the effectiveness of Explainable AI. Apply these advanced pro tips to elevate your machine learning projects above the competition.

- Prioritize Local and Global Explanations: Expert developers utilize both local and global Explainable AI. Global explanations show how the model behaves overall across all data, while local explanations detail why the model made a specific single prediction. You need both to achieve total systemic transparency.

- Visualize the Data Effectively: Raw Explainable AI mathematical outputs confuse non-technical stakeholders. Invest heavily in exceptional UI/UX design. Transform complex SHAP values into simple, color-coded bar charts or interactive dashboards that business leaders can comprehend instantly.

- Combine Multiple Techniques: Do not rely on a single Explainable AI method. Combine LIME and counterfactual explanations to provide users with a holistic understanding of system behavior. When multiple techniques agree, you gain confidence in the explanation; when they disagree, you know where to investigate further.

- Involve Domain Experts Early: Data scientists alone cannot determine if an explanation makes logical sense in the real world. Bring doctors, financial analysts, or legal experts into the Explainable AI evaluation process early. Their domain expertise ensures the algorithm’s logic aligns with proven human reality.
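To illustrate the first two tips together, here is a minimal sketch that rolls a set of local explanations up into a global ranking and renders it as a plain-text bar chart. The attribution values are invented for the example. In practice they would come from whichever explainer you use.

```python
def global_importance(local_explanations):
    """Mean absolute attribution per feature across many local explanations."""
    names = list(local_explanations[0])
    count = len(local_explanations)
    return {k: sum(abs(e[k]) for e in local_explanations) / count for k in names}


def text_bar_chart(importance, width=20):
    """Render importances as text bars, longest first."""
    top = max(importance.values()) or 1.0
    lines = []
    for name, value in sorted(importance.items(), key=lambda kv: -kv[1]):
        bar = "#" * round(width * value / top)
        lines.append(f"{name:<10} {bar} {value:.2f}")
    return "\n".join(lines)


# Hypothetical local explanations for three individual predictions.
local_explanations = [
    {"amount": 0.4, "foreign": 0.5, "night": 0.2},
    {"amount": 0.1, "foreign": -0.3, "night": 0.0},
    {"amount": 0.7, "foreign": 0.1, "night": -0.1},
]

importance = global_importance(local_explanations)
chart = text_bar_chart(importance)
print(chart)
```

Each row of `local_explanations` answers "why this prediction?", while the chart answers "what matters to the model overall?". A real dashboard would replace the text bars with proper graphics.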

Mini-Conclusion: Elevating Engineering Standards

Implementing these advanced expert tips transitions your team from basic compliance to true technical mastery. Exceptional Explainable AI engineering secures long-term product viability and massive market respect.

Common Mistakes to Avoid in Explainable AI

Even experienced engineering teams stumble when implementing Explainable AI. Avoiding these frequent, costly mistakes protects your project timeline and preserves critical algorithmic integrity.

- Confusing Correlation with Causation: Explainable AI highlights which features drove a prediction, but it does not prove causation. If an algorithm flags a zip code as a primary driver for loan default, the zip code correlates with the risk, but it does not cause it. Treating Explainable AI outputs as absolute causal truth leads to disastrous business decisions.

- Overwhelming the End User: Generating a large, highly technical SHAP plot for a non-technical consumer creates confusion. Failing to simplify Explainable AI outputs erodes user trust. Always translate mathematical explanations into clear, human-readable language.

- Ignoring the Quality of Training Data: Explainable AI only explains what the model learned. If you train your model on highly biased, garbage data, Explainable AI will simply explain a highly biased, garbage decision. Explainable AI never replaces the need for pristine, carefully curated datasets.

- Treating Explainability as an Afterthought: Trying to bolt Explainable AI onto a massive, undocumented legacy system rarely works efficiently. You must design your system architecture to support Explainable AI natively from the very first day of project planning.

- Assuming Interpretability Equals Fairness: Just because you understand exactly how a model makes a decision does not automatically mean the decision is fair or ethical. You still must actively mitigate the biases that your Explainable AI tools uncover.
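Translating numeric attributions into plain language can be as simple as the sketch below. The feature labels, attribution values, and sentence template are hypothetical. Note that the wording describes what moved the score, not what caused the real-world outcome, in keeping with the correlation caveat above.

```python
def plain_language(attributions, labels, top_n=2):
    """Turn signed attributions into a short sentence for a non-technical reader."""
    ranked = sorted(attributions.items(), key=lambda kv: -abs(kv[1]))[:top_n]
    phrases = []
    for name, value in ranked:
        direction = "up" if value > 0 else "down"
        phrases.append(f"{labels.get(name, name)} pushed the score {direction}")
    return "Main factors: " + "; ".join(phrases) + "."


# Hypothetical attributions and reader-friendly labels.
attributions = {"amount": -0.4, "foreign": 0.5, "night": 0.2}
labels = {
    "amount": "the transaction amount",
    "foreign": "the foreign location",
    "night": "the late-night timing",
}

message = plain_language(attributions, labels)
print(message)
```

Limiting the sentence to the top few factors keeps it readable. The full attribution table can stay available for auditors and data scientists.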

Mini-Conclusion: Securing Algorithmic Integrity

By proactively avoiding these common mistakes, you protect your organization from severe operational failures. Diligent, careful application of Explainable AI guarantees your systems remain both transparent and genuinely beneficial.

The Future of Explainable AI

As we look toward the horizon, Explainable AI will only grow in importance and sophistication. The rapid proliferation of massive large language models demands entirely new paradigms of transparency. Researchers actively develop advanced Explainable AI frameworks capable of interpreting billion-parameter generative models in real-time.

Furthermore, governments actively draft highly specific legislation mandating Explainable AI standards across all high-risk industries. Companies that embrace Explainable AI today future-proof their operations against tomorrow’s strict regulatory crackdowns. The industry moves aggressively toward a future where automated decisions require certified mathematical transparency. Ultimately, Explainable AI bridges the critical gap between raw computational power and human accountability, ensuring that our most advanced technologies always serve humanity safely, equitably, and transparently.

Mini-Conclusion: Preparing for Tomorrow

The evolution of machine learning absolutely depends on Explainable AI. Organizations that invest heavily in transparent infrastructures today will lead the global market tomorrow, defining the ultimate standard for ethical automated intelligence.

Conclusion

Explainable AI stands as the absolute cornerstone of modern, trustworthy machine learning ecosystems. By integrating robust transparency frameworks, automating bias detection, and engaging cross-functional domain experts, businesses build highly resilient systems. Embrace the power of Explainable AI to demystify complex algorithms, ensure strict regulatory compliance, and foster unprecedented user trust. Start auditing your machine learning models today to build a transparent, deeply accountable technological future.

Frequently Asked Questions

What exactly is Explainable AI?

Explainable AI refers to a set of sophisticated tools, techniques, and frameworks that help human users thoroughly understand and accurately interpret the complex decisions made by artificial intelligence and machine learning algorithms.

Why is Explainable AI important for business?

It guarantees strict regulatory compliance, actively prevents discriminatory algorithmic bias, dramatically accelerates model debugging processes, and builds essential trust with consumers who demand transparent, logical automated decisions.

What is the difference between global and local Explainable AI?

Global Explainable AI describes how a machine learning model makes decisions overall across an entire dataset. Local Explainable AI explains the specific mathematical reasoning behind one single, individual prediction or outcome.
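A local explanation can be sketched with a LIME-style surrogate: perturb one input, weight the perturbed samples by how close they stay to it, and fit a weighted linear model. The code below is a simplified illustration and not the LIME library's implementation. The black-box function, sample count, noise scale, and kernel width are all assumptions.

```python
import math
import random


def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def local_surrogate(predict, x, n_samples=400, scale=0.5, kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear model around `x`.

    Returns [intercept, coef_0, coef_1, ...] for the centred features z - x.
    """
    rng = random.Random(seed)
    dim = len(x) + 1
    ata = [[0.0] * dim for _ in range(dim)]  # accumulates A^T W A
    aty = [0.0] * dim                        # accumulates A^T W y
    for _ in range(n_samples):
        z = [xi + rng.gauss(0.0, scale) for xi in x]
        dist2 = sum((zi - xi) ** 2 for zi, xi in zip(z, x))
        weight = math.exp(-dist2 / kernel_width ** 2)  # closer samples count more
        row = [1.0] + [zi - xi for zi, xi in zip(z, x)]
        y = predict(z)
        for i in range(dim):
            aty[i] += weight * row[i] * y
            for j in range(dim):
                ata[i][j] += weight * row[i] * row[j]
    return solve_linear_system(ata, aty)


def black_box(z):
    """Hypothetical non-linear model: the two features interact."""
    return z[0] * z[1]


coefficients = local_surrogate(black_box, [1.0, 2.0])
print([round(c, 2) for c in coefficients])
```

Near the point (1, 2) this black box changes by roughly 2 per unit of the first feature and 1 per unit of the second, so the fitted slopes should land close to those values. At a different point the same model would yield different slopes, which is what makes the explanation local rather than global.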

How does Explainable AI help with regulatory compliance?

Laws like the GDPR demand transparency in automated decision-making. Explainable AI produces the documentation and evidence that help regulators verify that an algorithm acts fairly, ethically, and without illegal discrimination.

What are SHAP values in Explainable AI?

SHAP is a popular Explainable AI technique based on cooperative game theory. It assigns an importance value to every feature that contributed to a model’s prediction, computed exactly for some model types and approximated for others.

Can Explainable AI fix a bad machine learning model?

Explainable AI cannot automatically fix a bad model, but it vividly highlights exactly why the model fails. Developers use these precise insights to retrain the algorithm, adjust the data, and dramatically improve future performance.

Is Explainable AI only used for deep learning?

No. While developers heavily use Explainable AI to demystify complex deep neural networks, they also apply these techniques to interpret random forests, support vector machines, and ensemble models.

What is a counterfactual explanation?

A counterfactual explanation represents a highly actionable Explainable AI method. It tells a user exactly what specific data inputs they must change to receive a different, more favorable outcome from the algorithm.
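As a minimal sketch, a counterfactual can be found by searching for the smallest change to one input that flips the decision. The lending rule, field names, and step size below are hypothetical. Real counterfactual methods search over several features at once and respect constraints on what a person can actually change.

```python
def approve(applicant):
    """Hypothetical lending rule: required income grows with existing debt."""
    return applicant["income"] >= 30_000 + 50 * applicant["debt"]


def income_counterfactual(model, applicant, step=1_000, max_increase=100_000):
    """Smallest income increase (in multiples of `step`) that flips a denial.

    Returns 0 if already approved and None if no increase within range works.
    """
    if model(applicant):
        return 0
    for increase in range(step, max_increase + 1, step):
        candidate = {**applicant, "income": applicant["income"] + increase}
        if model(candidate):
            return increase
    return None


applicant = {"income": 45_000, "debt": 400}
needed = income_counterfactual(approve, applicant)

if needed:
    print(f"If your annual income were ${needed:,} higher, the system would approve your loan.")
elif needed == 0:
    print("The loan is already approved.")
else:
    print("No income increase in the searched range changes the decision.")
```

The search treats the model as a black box and only calls it, so the same loop works for any model that returns an approve or deny decision.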

Does Explainable AI slow down model performance?

Applying post-hoc Explainable AI techniques does require additional computational processing power. The overhead depends on the technique, the model, and the number of features. Some methods add little latency, while others are expensive enough that teams compute explanations asynchronously or only for a sample of predictions.

How do I get started with Explainable AI?

Start by clearly defining your audience’s transparency needs. Then, evaluate your current models, implement open-source Explainable AI libraries like SHAP or LIME, and build intuitive dashboards to communicate the algorithmic logic to your stakeholders clearly.
